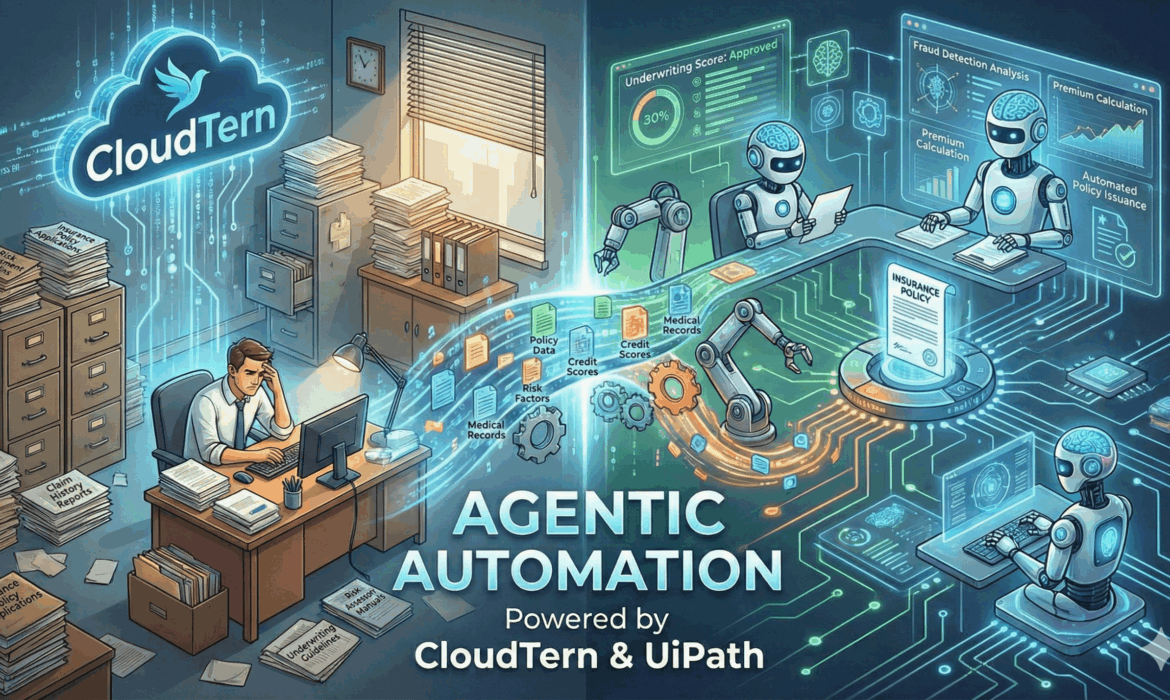

Reinventing Insurance Underwriting with Agentic Automation Powered by CloudTern & UiPath

The insurance industry is undergoing its most significant transformation in decades. Rising customer expectations, regulatory complexity, and massive volumes of unstructured data have pushed underwriting teams to their limits. Traditional RPA and rule-based automation helped, but today’s underwriting landscape demands something more adaptive, more intelligent, and more autonomous.

Top 10 AI Workflow Automation Platforms in 2025

Discover the top AI workflow automation platforms, categorized for small businesses, mid-sized companies, and large enterprises, to streamline your operations.

Transforming Talent Acquisition with Gen AI: A Competitive Advantage for Modern Organizations

In today’s competitive job market, winning top talent means recruiting faster, smarter, and at scale. Traditional systems fall short in delivering personalized, data-driven hiring. Generative AI is revolutionizing talent acquisition by using advanced AI models, especially large language models, to generate, understand, and optimize recruitment processes.

Unlocking Efficiency: How AI Can Eliminate Workflow Bottlenecks in Supply Chain

From unexpected delays to inaccurate forecasts, bottlenecks in supply chain workflows often arise not from major breakdowns, but from small, unnoticed inefficiencies compounded over time. The result? Slower delivery cycles, rising costs, and missed opportunities.

Mastering AI in Supply Chains: A Guide to Successful Implementation

Artificial intelligence has become a vital driver of transformation in supply chain management. From predictive analytics to automated inventory optimization, AI is revolutionizing the way businesses forecast, plan, and deliver. But successful AI implementation isn’t a one-size-fits-all solution; it requires careful preparation and a strong operational focus.

Mastering Supply Chain Efficiency: The Strategic Edge of AI

Supply chain management has always been a complex operation, balancing multiple moving parts across procurement, manufacturing, logistics, and delivery. Traditionally, businesses have struggled with challenges like limited visibility across the supply chain, inaccurate demand forecasting, delayed responses to disruptions, rising operational costs, and inefficient manual processes.

Harnessing AI: A Strategic Advantage For Today’s Business Leaders

In today’s fast-paced digital economy, artificial intelligence is no longer a futuristic concept; it’s a present-day differentiator. Forward-thinking leaders increasingly view AI not just as a tool, but as a strategic imperative that drives efficiency and innovation.

AI-Powered Workflow Automation For Healthcare Efficiency

In an era where healthcare organizations are expected to deliver faster, more personalized care with tighter budgets and fewer resources, operational efficiency is no longer a luxury; it’s a necessity.

Automating Customer Service: The Role of AI in Modern Contact Centers

Imagine a world where customer service is available 24/7 and inquiries are resolved instantly, without the frustration of waiting on hold. As customer expectations grow in today’s fast-paced digital landscape, organizations are increasingly looking for innovative solutions to improve efficiency and satisfaction. Automating customer service has emerged as a key strategy in this evolution, with artificial intelligence (AI) at the forefront of modern contact centers.

AI technologies, including chatbots, natural language processing, and predictive analytics, empower businesses to streamline their operations, reduce response times, and deliver personalized experiences. By adopting these AI-driven solutions, companies are transforming the way they interact with customers and setting new standards for service excellence. This shift not only drives greater customer loyalty but also enhances overall operational effectiveness, ensuring that businesses remain competitive in an ever-changing market.

The Evolution of Contact Centers: From Manual to AI-Driven Operations

Contact centers have evolved from traditional manual processes to sophisticated AI-driven systems. In the past, human agents handled customer inquiries over the phone, and high call volumes often led to long wait times and inconsistent service. Automatic call distribution (ACD) and interactive voice response (IVR) technologies improved efficiency, but they still relied heavily on human involvement, which showed the need for greater automation in customer service.

Today, AI technologies are reshaping contact centers by improving both customer experience and operational efficiency. Using natural language processing, predictive analytics, and machine learning, contact centers can automate routine inquiries and provide personalized assistance around the clock through AI chatbots and virtual assistants. This frees human agents to focus on more complex issues, streamlining processes and shortening response times, which ultimately raises customer satisfaction. As AI continues to evolve, contact centers are set to expand these capabilities further, helping organizations succeed in a competitive environment.

Key AI Technologies Powering Modern Contact Centers

Modern contact centers are transforming customer service with AI-driven technologies. Natural Language Processing (NLP) empowers chatbots and virtual assistants to understand and engage in human-like conversations. These intelligent tools efficiently handle inquiries, provide instant information, and complete transactions without human intervention. By reducing wait times and automating routine tasks, AI enhances speed and accuracy in customer support. Voice and text interactions feel seamless, ensuring a smooth and engaging experience. As a result, businesses can deliver faster, more responsive service while optimizing resources.
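The paragraph above describes chatbots that recognize what a customer wants, resolve routine requests on their own, and leave the rest to people. The minimal Python sketch below illustrates that control flow under a simplifying assumption: a hypothetical keyword table (`INTENT_KEYWORDS`) stands in for a real NLP model, and `classify_intent` and `handle_inquiry` are illustrative names, not any vendor's API.

```python
import re
from typing import Optional

# Hypothetical intents and trigger words; a real chatbot would use a trained NLP model.
INTENT_KEYWORDS: dict[str, set[str]] = {
    "billing": {"bill", "charge", "invoice", "refund"},
    "outage": {"outage", "down", "disconnected", "signal"},
    "appointment": {"appointment", "schedule", "reschedule", "book"},
}


def classify_intent(message: str) -> Optional[str]:
    """Return the intent whose keywords best overlap the message, or None if none match."""
    tokens = set(re.findall(r"[a-z]+", message.lower()))
    scores = {intent: len(tokens & words) for intent, words in INTENT_KEYWORDS.items()}
    best_intent, best_score = max(scores.items(), key=lambda item: item[1])
    return best_intent if best_score > 0 else None


def handle_inquiry(message: str) -> str:
    """Resolve recognized routine intents automatically; escalate everything else to a person."""
    intent = classify_intent(message)
    if intent is None:
        return "escalate:human_agent"
    return f"bot:{intent}"


print(handle_inquiry("Can I reschedule my appointment?"))  # bot:appointment
print(handle_inquiry("I'd like to discuss my contract terms"))  # escalate:human_agent
```

The explicit escalation path is the important design choice: the bot answers only what it can classify, and everything else reaches a human agent.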

Predictive analytics takes customer interactions to the next level by forecasting needs before they arise. By analyzing past data, AI helps agents proactively address issues like billing concerns or service disruptions. This foresight enables personalized interactions that improve customer satisfaction and loyalty. Real-time speech recognition and sentiment analysis further enhance quality assurance and agent performance. These AI capabilities refine training, ensuring teams are better equipped to handle complex requests. As AI evolves, it continues to revolutionize contact centers, making them more agile, intelligent, and customer-focused.
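Sentiment analysis for quality assurance can be illustrated with a deliberately simple lexicon-based scorer that flags calls for supervisor review. This is a sketch, not a production approach: the word lists, the `needs_review` helper, and the -0.5 threshold are hypothetical choices, and real deployments generally run trained sentiment models over speech-to-text transcripts.

```python
import re

# Hypothetical sentiment lexicon; production systems typically use trained models.
POSITIVE = {"thanks", "great", "helpful", "resolved", "happy"}
NEGATIVE = {"angry", "terrible", "cancel", "frustrated", "waiting", "worst"}


def utterance_sentiment(text: str) -> int:
    """Score one customer utterance: +1 for each positive word, -1 for each negative word."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return sum(int(t in POSITIVE) - int(t in NEGATIVE) for t in tokens)


def needs_review(transcript: list[str], threshold: float = -0.5) -> bool:
    """Flag a call for supervisor review when average customer sentiment falls below the threshold."""
    if not transcript:
        return False
    scores = [utterance_sentiment(u) for u in transcript]
    return sum(scores) / len(scores) < threshold


call = ["I have been waiting for an hour", "This is terrible, I want to cancel"]
print(needs_review(call))  # True
```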

The Advantages of AI in Contact Centers: Cost Savings and Scalability

AI adoption in contact centers improves cost efficiency and scalability by automating tasks such as answering customer inquiries, processing transactions, and scheduling. Automating this routine work reduces the staff hours it requires and cuts operational costs, while intelligent call routing directs each remaining contact to the agent best suited to handle it.
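One common way to implement intelligent call routing is skills-based routing: send each call to an available agent who has the required skill, preferring the least busy one. The sketch below assumes a hypothetical `Agent` record and `route_call` helper; returning `None` stands in for placing the call in a queue or handing it to a chatbot.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Agent:
    """Hypothetical agent record used only for this illustration."""
    name: str
    skills: set[str]
    available: bool
    active_calls: int = 0


def route_call(required_skill: str, agents: list[Agent]) -> Optional[str]:
    """Skills-based routing: choose the least busy available agent with the required skill.

    Returns None when no agent qualifies, meaning the call should be queued or sent to a bot.
    """
    candidates = [a for a in agents if a.available and required_skill in a.skills]
    if not candidates:
        return None
    chosen = min(candidates, key=lambda a: a.active_calls)
    chosen.active_calls += 1  # record the new assignment so load stays balanced
    return chosen.name


team = [
    Agent("ana", {"billing"}, available=True, active_calls=2),
    Agent("raj", {"billing", "technical"}, available=True),
    Agent("li", {"technical"}, available=False),
]
print(route_call("billing", team))  # raj
print(route_call("spanish", team))  # None
```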

AI also enables seamless scaling to match fluctuating demand. During peak periods, AI chatbots efficiently manage surges, ensuring fast response times and consistent service quality. This adaptability enhances customer satisfaction and loyalty while helping businesses stay competitive, improve productivity, and respond swiftly to market changes.

Boosting Agent Productivity and Reducing Workload with AI

AI is revolutionizing workplace productivity by automating routine tasks like data entry, customer inquiries, and report generation. This allows agents to focus on complex issues requiring critical thinking and creativity, improving efficiency and service quality. AI chatbots handle basic queries, freeing up agents for more meaningful work that adds value to their organizations.

Additionally, AI-driven analytics provide real-time insights, helping agents make informed decisions and personalize customer interactions. By streamlining workloads and enhancing outcomes, AI empowers employees to maximize their skills while reducing repetitive tasks. As AI advances, it continues to create a more efficient, dynamic, and responsive workplace.

The Future of AI in Contact Centers: Trends and Innovations

AI is poised to transform contact centers further through chatbots and voice assistants that deliver immediate automated responses. This improves the user experience while allowing human agents to concentrate on complex issues, resulting in more seamless service.

Moreover, AI advancements will improve operational efficiency in contact centers, optimizing processes and promoting better resource management and cost savings. Continuous innovations in AI technology will keep contact centers adaptable to customer needs, enabling businesses to enhance service capabilities and maintain a competitive edge in a fast-paced market.

Securing Tomorrow: The Key Role of Cybersecurity in Telecommunications

Understanding the Cyber Threat Landscape in Telecom

The telecommunications industry is increasingly becoming a prime target for cyber threats due to its critical role in global connectivity. As telecom networks evolve with new technologies, such as 5G and the Internet of Things (IoT), they face heightened vulnerabilities. Cybercriminals exploit these weaknesses to launch attacks, disrupt services, and compromise sensitive data, making it essential for telecom companies to understand the cyber threat landscape.

To safeguard against these risks, telecom providers must adopt robust cybersecurity measures and stay updated on emerging threats. This includes investing in advanced security solutions, conducting regular threat assessments, and fostering a culture of cybersecurity awareness among employees. By prioritizing cybersecurity, telecom companies can protect their networks and maintain customer trust, ensuring secure and reliable communication services.

Key Cybersecurity Challenges for Telecommunications Providers

- Increasing Sophistication of Cyber Attacks: Telecommunications providers face increasingly sophisticated cyber threats, such as advanced persistent threats (APTs) and ransomware, that exploit vulnerabilities in their infrastructures. Given their crucial role in global communication, these networks are prime targets for cybercriminals. Providers must invest in advanced threat detection and establish robust incident response plans to quickly identify and mitigate attacks.

- Complexity of Telecommunications Networks: The complex nature of telecommunications networks, including legacy systems and modern platforms, creates security challenges. The rapid growth of Internet of Things (IoT) devices further complicates security, as many lack adequate protections. Providers need a unified cybersecurity strategy that includes regular vulnerability assessments and network segmentation to effectively manage risks across all components.

- Regulatory Compliance: Telecommunications companies must navigate a complex regulatory environment, adhering to strict regulations such as GDPR and CCPA. Non-compliance can lead to hefty fines and reputational harm. As regulations evolve in response to new threats, telecom providers must invest in compliance-focused cybersecurity frameworks and ongoing employee training to ensure alignment with current standards.

- Insider Threats and Human Error: Insider threats and human error pose significant security risks to telecommunications networks. Employees can unintentionally expose systems to breaches, and malicious insiders can deliberately misuse their access. Providers should prioritize employee training on cybersecurity best practices and implement strict access controls and monitoring systems to protect critical assets.
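The network segmentation recommended for complex telecom networks can be expressed as a deny-by-default table of permitted flows between zones. In this sketch the zone names, ports, and `flow_permitted` helper are hypothetical, and allowing all intra-zone traffic is a simplifying assumption; in practice such rules are enforced in firewall or software-defined networking policy rather than in application code.

```python
# Hypothetical zones and the only cross-zone flows allowed between them (deny by default).
ALLOWED_FLOWS: dict[tuple[str, str], set[int]] = {
    ("iot-devices", "iot-gateway"): {8883},  # MQTT over TLS only
    ("iot-gateway", "core-services"): {443},
    ("corporate-it", "core-services"): {22, 443},
}


def flow_permitted(src_zone: str, dst_zone: str, port: int) -> bool:
    """Allow traffic inside a zone; across zones, allow only explicitly listed (direction, port) pairs."""
    if src_zone == dst_zone:
        return True  # assumption: intra-zone traffic is handled by separate host-level controls
    return port in ALLOWED_FLOWS.get((src_zone, dst_zone), set())


# An IoT device cannot reach core services directly; it must go through the gateway.
print(flow_permitted("iot-devices", "core-services", 443))  # False
print(flow_permitted("iot-devices", "iot-gateway", 8883))  # True
```

Because rules are keyed by direction, a compromised gateway still cannot open connections back into the device zone unless a rule explicitly allows it.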

Innovative Cybersecurity Solutions for the Telecommunications Industry

Amid rising cyber threats, the telecommunications industry is adopting innovative solutions to bolster cybersecurity and protect sensitive information. A key advancement is the integration of artificial intelligence (AI) and machine learning (ML) into cybersecurity protocols. These technologies allow telecom companies to analyze extensive network data in real time, identifying potential threats and anomalies that might otherwise go undetected. By utilizing predictive analytics, AI systems can proactively spot vulnerabilities and trigger automated responses, effectively mitigating risks before they escalate into serious incidents. This proactive approach is vital as cyber threats become more sophisticated.
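For illustration, the real-time anomaly detection described here can be approximated by a rolling z-score over a network metric such as packets per second. This is a simple statistical stand-in for the ML models the paragraph mentions, not a description of any specific product; the class name, window size, sample values, and 3-standard-deviation threshold are assumptions.

```python
import statistics
from collections import deque


class RollingAnomalyDetector:
    """Flag readings that sit more than z_threshold standard deviations from a rolling baseline."""

    def __init__(self, window: int = 30, z_threshold: float = 3.0, min_samples: int = 5):
        self.history: deque = deque(maxlen=window)  # recent normal readings only
        self.z_threshold = z_threshold
        self.min_samples = min_samples

    def observe(self, value: float) -> bool:
        """Return True if value is anomalous; otherwise add it to the baseline."""
        if len(self.history) >= self.min_samples:
            mean = statistics.fmean(self.history)
            stdev = statistics.pstdev(self.history)
            if stdev > 0 and abs(value - mean) / stdev > self.z_threshold:
                return True  # anomalous readings are kept out of the baseline
        self.history.append(value)
        return False


# Hypothetical packets-per-second samples from one network element.
detector = RollingAnomalyDetector(window=10)
for pps in [100, 102, 98, 101, 99, 100, 103, 97]:
    detector.observe(pps)
print(detector.observe(250))  # True: a sudden spike well outside the baseline
```

Keeping flagged readings out of the baseline stops a sustained attack from gradually being learned as normal traffic; the trade-off is that a legitimate, lasting jump in traffic keeps being flagged until the baseline is reset.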

Additionally, the shift to a zero-trust security model is revolutionizing how telecom companies secure their networks. Unlike traditional frameworks that often assume internal traffic is safe, the zero-trust model operates on the principle of “never trust, always verify.” This approach requires strict authentication for every user and device accessing network resources, regardless of their location. Continuous monitoring and multifactor authentication help telecom providers significantly reduce the risk of data breaches. By implementing this model, companies enhance their security resilience, ensuring they can better withstand complex cyber threats while maintaining customer trust.
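The “never trust, always verify” principle can be sketched as an authorization function that evaluates every request on identity, multifactor authentication, and device posture, and ignores where the request came from. The `AccessRequest` fields and the `POLICY` allow-list below are hypothetical; real zero-trust deployments delegate these checks to an identity provider and a policy engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    """Hypothetical request record; field names are illustrative."""
    user: str
    resource: str
    mfa_verified: bool
    device_compliant: bool
    source_network: str  # logged for auditing but deliberately ignored by the decision


# Hypothetical explicit grants: only these (user, resource) pairs are ever allowed.
POLICY: set[tuple[str, str]] = {
    ("noc-engineer-1", "core-router-config"),
    ("billing-analyst-7", "billing-db"),
}


def authorize(request: AccessRequest) -> bool:
    """Evaluate every request the same way, whether it originates inside or outside the network."""
    if not request.mfa_verified:
        return False  # always verify identity with a second factor
    if not request.device_compliant:
        return False  # device posture is checked on every call, not once at login
    return (request.user, request.resource) in POLICY  # deny by default


inside = AccessRequest("noc-engineer-1", "core-router-config", mfa_verified=False,
                       device_compliant=True, source_network="internal")
print(authorize(inside))  # False: being on the internal network earns no trust
```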

Moreover, collaboration within the industry is crucial for strengthening cybersecurity. Telecom companies are forming partnerships with cybersecurity firms to share intelligence and best practices, leading to a deeper understanding of the threat landscape. These collaborations aid in developing tailored solutions addressing specific sector vulnerabilities. By embracing innovative technologies and fostering a culture of collaboration, telecom providers can stay ahead of cyber adversaries, effectively protecting their infrastructure and customer data in a rapidly evolving digital environment.

Collaboration and Information Sharing in Telecom

Collaboration and information sharing in the telecom sector are essential to address the rapidly evolving challenges posed by cyber threats, technological advancements, and regulatory requirements. By pooling resources and insights, telecom companies can strengthen their defenses against cyberattacks, ensuring more resilient networks and secure communications. This collective approach helps in identifying vulnerabilities and developing best practices to counter threats effectively, benefiting the entire ecosystem. Initiatives like sharing threat intelligence and collaborating on industry standards allow companies to stay ahead of attackers and comply with regulations more efficiently.

Furthermore, partnerships between telecom operators, technology providers, and regulatory bodies foster innovation while maintaining security and operational efficiency. For example, collaboration on 5G and AI integration helps ensure that new technologies are deployed securely and sustainably. Open dialogue between stakeholders, including public and private entities, enables a more unified approach to industry-wide challenges such as data privacy, network optimization, and global connectivity. By embracing a culture of collaboration and transparency, the telecom sector can achieve robust growth while safeguarding its critical infrastructure.

Future-Proofing Telecom Cybersecurity Strategies

To future-proof cybersecurity strategies, telecommunications providers must address the expanding threats associated with 5G technology and the widespread use of Internet of Things (IoT) devices. These developments have broadened the attack surface, making telecom networks more appealing to cybercriminals. To combat these challenges, companies should implement adaptable security measures, leveraging advanced technologies like artificial intelligence (AI) and machine learning (ML) for enhanced threat detection and real-time network data analysis. This proactive approach facilitates early identification of anomalies before they escalate into serious breaches.

Moreover, cultivating a culture of cybersecurity awareness and compliance is essential. Continuous training for all employees helps minimize human error, a frequent contributor to security incidents. Telecom providers must also stay agile in response to regulatory changes by adopting flexible compliance frameworks. Collaborating with industry partners and cybersecurity experts can further bolster resilience through shared insights and best practices. By fostering a security-focused mindset and maintaining adaptability in their strategies, telecommunications companies can strengthen network protection and preserve customer trust in an increasingly interconnected world.